Autoscale Deployments run on cloud computing resources that scale up and down to efficiently handle the network traffic and workload of your Replit App. When your app is busy, autoscaling adds servers to manage the load. When your app is idle, it reduces the number to as low as zero to save you money. Autoscale Deployments are ideal for the following use cases:

- Web applications that handle variable workloads and traffic, such as ecommerce sites

- APIs and services

Features

Autoscale Deployments include the following features:

- Automatic resource scaling: Automatically adjusts resources based on traffic patterns to optimize costs.

- Custom domains: Configure a custom domain or use a `<app-name>.replit.app` URL to access your app.

- Configurable limits: Set the maximum number of instances your published app can scale to.

- Flexible machine power: Choose the CPU and RAM configuration that meets your app’s needs.

- Monitoring: View logs and monitor your published app’s status.

Usage

You can access Autoscale Deployments in the Publishing tool in the Project Editor.

How to access Autoscale Deployment

From the left Tool dock:

- Select

All tools to see a list of Project Editor tools.

- Select

Publishing.

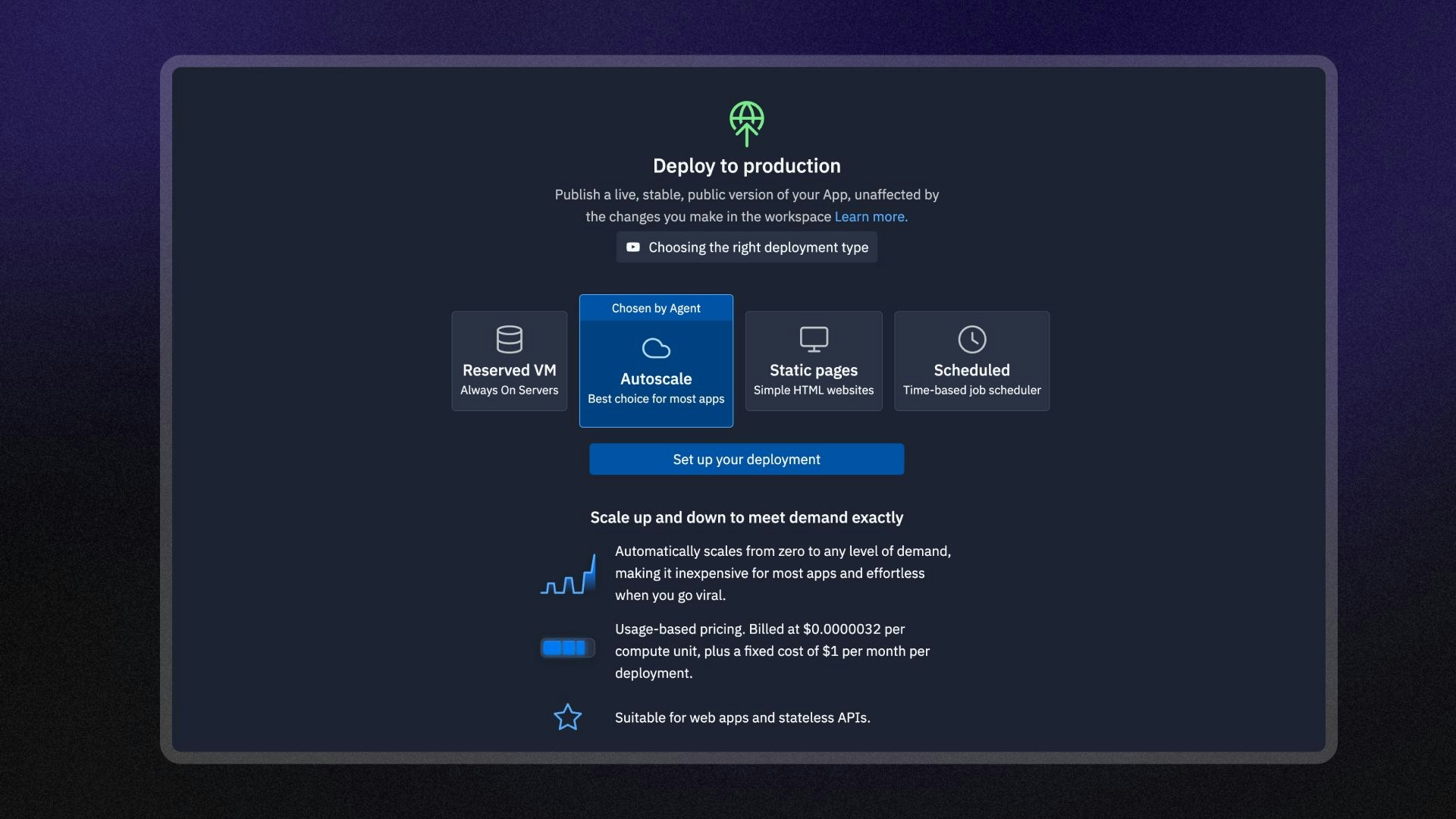

- Select the Autoscale option and then select Set up your published app.

Alternatively, from the search tool:

- Select the magnifying glass at the top to open the search tool.

- Type “Publishing” to locate the tool and select it from the results.

- Select the Autoscale option and then select Set up your published app.

Machine power

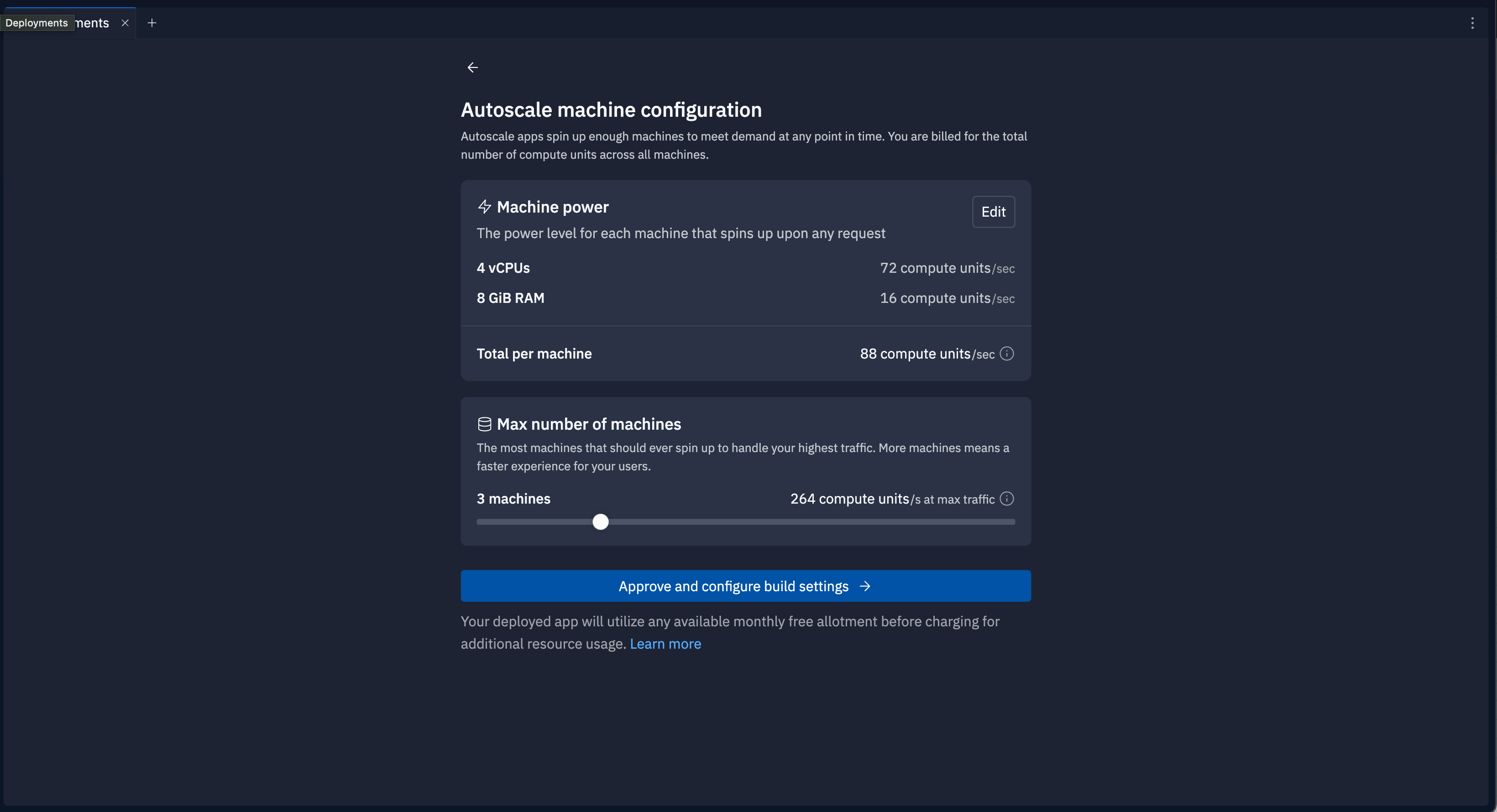

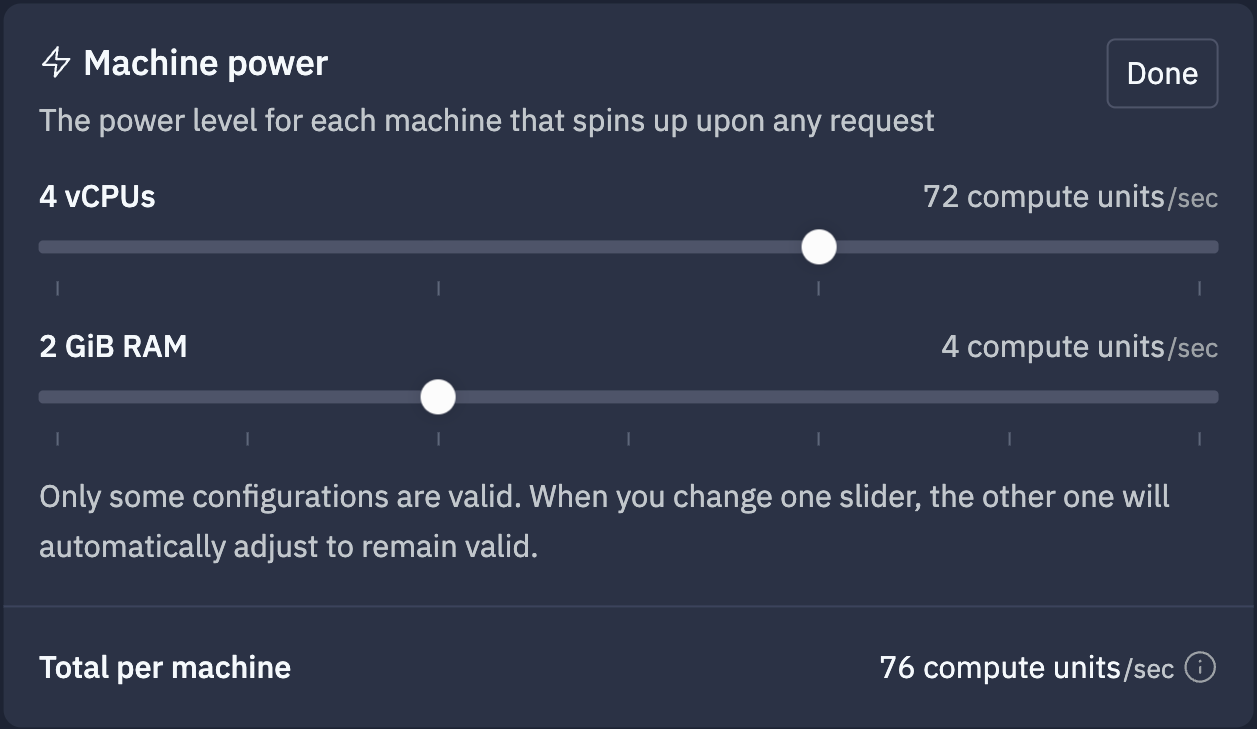

Select Edit to view and set the machine power options. Use the sliders to select the CPU and RAM configuration for each published app server instance. View the compute unit cost for the configuration in the Total per machine row. A compute unit is a measurement of cloud computing resources based on the memory and CPU configuration of the machine. To learn more about calculating the cost based on Compute Units, see Compute Units.

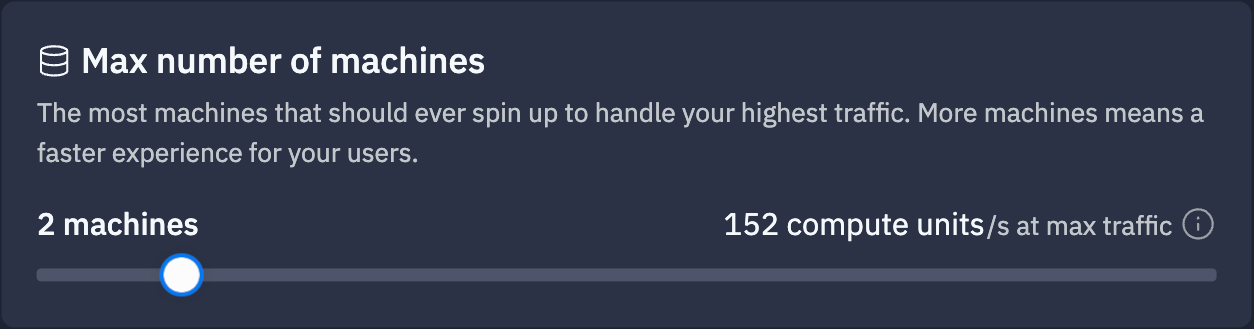

Max number of machines

Use the slider to adjust the maximum number of machines. This number is the upper limit of server instances the autoscaling feature can assign when it determines your app is busy. The bottom row shows the equivalent compute units, calculated by the following formula:

Number of machines * compute units per machine
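As a rough illustration, the compute unit formula above can be written as a small Python function. This is a minimal sketch, not part of Replit's tooling: the function name `max_compute_units` and the example values are hypothetical, and the real per-machine figure should be read from the Total per machine row.

```python
def max_compute_units(max_machines: int, units_per_machine: float) -> float:
    """Return the compute units used when every allowed machine is running.

    Implements: number of machines * compute units per machine.
    """
    if max_machines < 0 or units_per_machine < 0:
        raise ValueError("inputs must be non-negative")
    return max_machines * units_per_machine


# Hypothetical values for illustration only. Take the real ones from the
# "Total per machine" row and the max number of machines slider.
example_units_per_machine = 4.0
example_max_machines = 3

print(max_compute_units(example_max_machines, example_units_per_machine))  # prints 12.0
```

Because autoscaling can reduce the number of machines to as low as zero when the app is idle, this value is an upper bound at peak scale, not a prediction of typical usage.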

Next steps

- Published App Monitoring: Learn how to view logs and monitor your published app.

- Publishing costs: View the costs associated with publishing.

- Pricing: View the pricing and allowances for each plan type.

- Usage Allowances: Learn about scheduled deployment usage limits and billing units.